Guide to Google Manual Actions and How to Fix Them

Ever since the first search engines appeared in the ’90s, people have tried to exploit search algorithms and find an easy path to the top of the search results. This became a problem for search engines: they were trying to give users the best results, but spam websites kept coming out on top.

As a result, Google improved its algorithm so that it can better evaluate websites for shady tactics and detect spam more easily. And to keep these websites from ruining the user experience, Google started slapping spam websites with manual actions.

Imagine going to work one morning. You pull up Google Analytics and your rank monitoring tool, and everything is at zero. You sip some coffee and start investigating. Maybe there’s just something wrong with the tracking code, right? But there’s nothing wrong with it.

Then it hits you. You realize you’ve been penalized. In the blink of an eye, months or years’ worth of work is lost.

Manual actions can drive SEOs crazy. If there is one thing you must avoid in this industry, it’s this one.

If you’re reading this article, I hope you’re here because you want to know more about manual actions and not because you’ve been hit by one. Let’s get right into it!

What is a Manual Action?

A manual action, or penalty, is issued by Google to websites that use unethical practices that violate its guidelines. These practices are better known as Black Hat SEO, and they seek to manipulate search engine results.

To determine if a website deserves a manual action, Google employs thousands of human reviewers to check if websites comply with Google’s Webmaster Quality Guidelines.

What happens when I receive a Manual Action?

Once you receive a penalty from Google, the affected pages, or even the whole website, will experience a huge drop in search rankings or disappear from the search results entirely. Organic traffic to those pages will drop with them. The drop is quick and could happen within a few days or even overnight.

How to Check for a Manual Action?

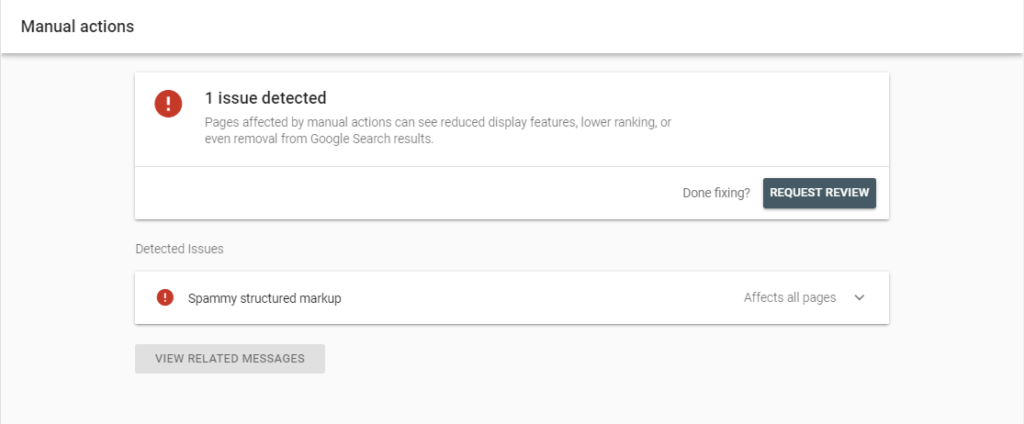

If you are given a manual action, you will be notified by Google through the Manual Action report in Google Search Console. You will see a message similar to this:

In the report, you will see the types of issues Google detected on your website and the list of pages that are affected. If you have submitted reconsideration requests, you will also be notified here if Google rejects them.

List of Manual Actions

Below is the list of manual actions given by Google and tips on how to fix or avoid them.

User-Generated Spam

Websites that allow user-generated content such as forums and blog comments may receive this manual action if users bombard the website with self-promoting text and links to unrelated and spammy websites.

Fix

Use the “site:” search operator to check your website for malicious content from users and delete it. Sample: [site:https://samplesite.com viagra]. It is also better to prevent this in the first place by moderating all user content before it is approved.
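If you have a long list of spam terms to check, typing each query by hand gets tedious. Here is a minimal sketch that builds one “site:” query per term. The term list and the `build_site_queries` helper are my own illustrations, not part of any Google tool, and I use the bare domain here for brevity.

```python
# Hypothetical spam terms; replace them with terms relevant to your niche.
SPAM_TERMS = ["viagra", "casino", "payday loan"]


def build_site_queries(domain: str, terms: list[str]) -> list[str]:
    """Return one `site:` query per term, quoting multi-word terms."""
    queries = []
    for term in terms:
        # Quote phrases so the search matches the exact wording.
        phrase = f'"{term}"' if " " in term else term
        queries.append(f"site:{domain} {phrase}")
    return queries


for query in build_site_queries("samplesite.com", SPAM_TERMS):
    print(query)
```

Paste each printed query into Google and review the results by hand. The script only builds the queries; it does not run them.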

Spammy Free Host

Opting for a free hosting service is good for cutting costs, but it could lead to a manual action. If you’re using a free hosting service, your website shares the same server with other websites. Even if your website is clean, spammers that you share the server with might affect the whole server, and Google may issue a manual action to all websites on that server.

Fix

Contact the technical support of the hosting company you are using and inform them of the manual action. If there are no developments, I suggest that you move to a secure hosting provider.

Structured Data Issue

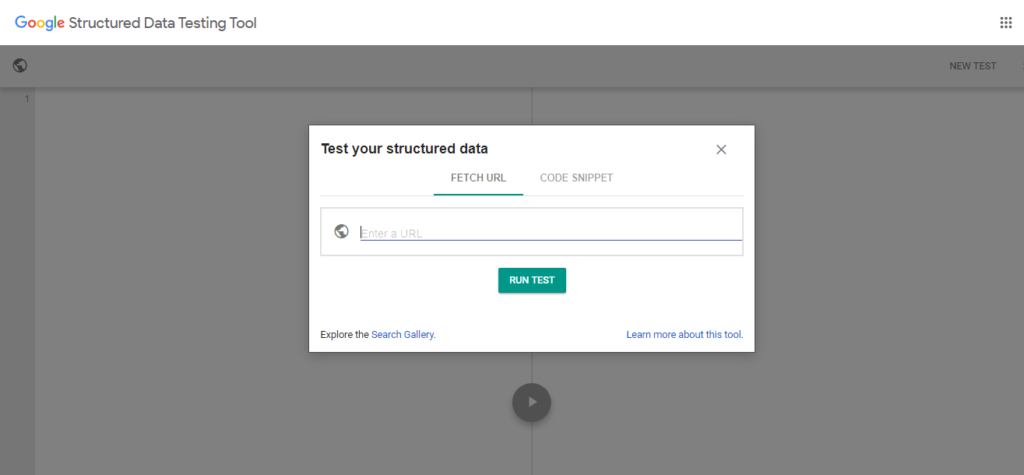

Google can detect if the implementation of structured data is against the Structured Data Guidelines.

Fix

Update the existing structured data markup on your website so that it complies with the guidelines. You could use Google’s Rich Results Test, which replaced the older Structured Data Testing tool, to catch errors.
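Before you run a page through a testing tool, a quick local sanity check can catch broken markup. The sketch below, written under my own assumptions, pulls JSON-LD blocks out of a page and reports the ones that are not valid JSON or that lack an `@context`. The class and function names are hypothetical, and it only checks syntax: it cannot tell you whether the markup is misleading, which is what the manual action is really about.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json">."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def check_jsonld(html: str) -> list[str]:
    """Return a list of human-readable problems found in JSON-LD blocks."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    problems = []
    for number, raw in enumerate(extractor.blocks, start=1):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            problems.append(f"block {number}: invalid JSON ({exc.msg})")
            continue
        items = data if isinstance(data, list) else [data]
        for item in items:
            if not isinstance(item, dict) or "@context" not in item:
                problems.append(f"block {number}: missing @context")
    return problems
```

An empty list means the blocks parsed cleanly, not that they follow the guidelines, so still review what the markup claims against what the page actually shows.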

Unnatural links to your site

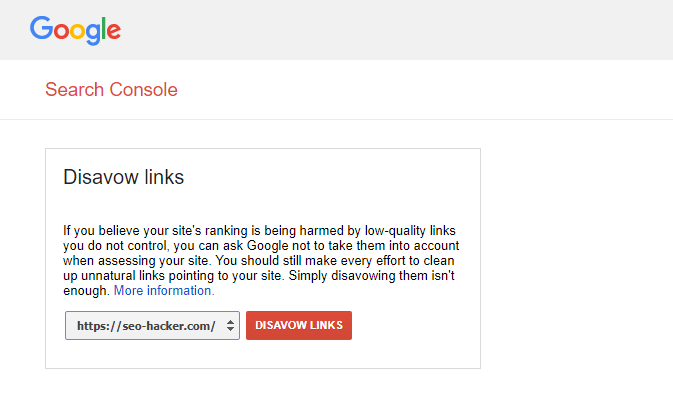

Receiving this manual action means that Google detected unusual patterns of links to your website that are intended to manipulate PageRank. Actions such as buying links from private blog networks (PBNs) and excessive link exchanges can lead to a penalty.

Fix

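Go to Google Search Console’s Links report to check for links that Google might find suspicious. Where you can, ask the linking sites to remove those links first. For the ones that remain, prepare a text file that lists them and submit it through the disavow tool.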
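The disavow file itself is plain text: one URL or one `domain:` entry per line, with lines starting with `#` treated as comments. As a rough sketch, here is how you could generate it from lists you have compiled. The `build_disavow_file` helper and the example domains are hypothetical.

```python
def build_disavow_file(domains, urls, note=""):
    """Build the text of a disavow file from domains and individual URLs."""
    lines = []
    if note:
        # Lines that start with "#" are comments.
        lines.append(f"# {note}")
    # Disavow every link from a whole domain.
    lines.extend(f"domain:{domain}" for domain in sorted(set(domains)))
    # Disavow individual pages only.
    lines.extend(sorted(set(urls)))
    return "\n".join(lines) + "\n"


text = build_disavow_file(
    domains=["spammy-network.example", "link-farm.example"],
    urls=["http://blog.example/paid-post.html"],
    note="Owners contacted, no response",
)
print(text)
```

Save the output as a .txt file and upload it in the disavow tool. Be conservative about what goes into it, since disavowing good links can hurt you.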

Unnatural links from your site

There is an unnatural pattern of outbound links from your website that might give Google the impression that you are selling links or participating in link schemes.

Fix

Identify links that are paid or affiliate links and mark them with the rel="sponsored" attribute (rel="nofollow" also works), or remove them.
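To find candidates for review, you can scan a page for outbound links that carry neither `sponsored` nor `nofollow`. This is only a sketch under my own assumptions: the class and function names are hypothetical, and it flags every unqualified external link, so you still have to decide by hand which of them are actually paid.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

# rel values that tell Google not to treat the link as an editorial vote.
QUALIFYING_REL = {"sponsored", "nofollow"}


class OutboundLinkAuditor(HTMLParser):
    """Collect external links that have no qualifying rel value."""

    def __init__(self, own_host):
        super().__init__()
        self.own_host = own_host
        self.unqualified = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href") or ""
        host = urlparse(href).netloc
        if not host or host == self.own_host:
            return  # Relative or internal link, nothing to check.
        rel_tokens = set((attr.get("rel") or "").lower().split())
        if not rel_tokens & QUALIFYING_REL:
            self.unqualified.append(href)


def find_unqualified_links(html, own_host):
    auditor = OutboundLinkAuditor(own_host)
    auditor.feed(html)
    return auditor.unqualified


page = (
    '<a href="https://shop.example/deal">Buy now</a>'
    '<a rel="sponsored" href="https://ads.example/">Ad</a>'
    '<a href="/about">About us</a>'
)
print(find_unqualified_links(page, "samplesite.com"))
```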

Thin content with little or no added value

Google has strict guidelines when it comes to the quality of content. According to Google, examples of pages with thin content or no added value are:

- Automatically generated content

- Thin affiliate pages

- Scraped content

- Low-quality guest posts

- Doorway pages

Fix

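Review whether these pages can be improved, and make sure they provide value to users. Alternatively, you can block Google from crawling these pages through a robots.txt file, or add a noindex tag to remove them from the search results. Do not do both on the same page: Google has to crawl a page to see its noindex tag, so a page blocked in robots.txt can stay in the index.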
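If you go the noindex route, it helps to confirm that the tag is really in the HTML your server returns. A minimal sketch, assuming the tag is set with a robots meta tag rather than an HTTP header (the names below are hypothetical):

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = (attr.get("content") or "").lower()
            # Directives are comma-separated, e.g. "noindex, follow".
            self.directives.update(
                token.strip() for token in content.split(",") if token.strip()
            )


def has_noindex(html: str) -> bool:
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


print(has_noindex('<head><meta name="robots" content="noindex, follow"></head>'))
```

This does not look at the `X-Robots-Tag` response header, which can also carry noindex, so treat a negative result as incomplete.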

Cloaking and/or sneaky redirects

Cloaking is the act of manipulating the code of a page to show users a different page from what was submitted to Google, while sneaky redirects send users to a page they did not intend to visit.

Fix

Use Google Search Console’s URL Inspection tool to check how Google sees the pages that are affected. Fix the code and content of the pages and remove any shady redirects.

Pure spam

Google detected pages on your website that blatantly use spam techniques.

Fix

Make sure that all pages on your website adhere to Google’s Webmaster Guidelines.

Cloaked images

Similar to the cloaking described earlier, Google issues a manual action for cloaked images when the image it crawls on a page is different from the one shown to users.

Fix

Make sure that your website shows users exactly the same images that appear in the Google search results.

Hidden text and/or keyword stuffing

Excessively repeating a target keyword on a single page, and/or hiding text by covering it with an image or making it white so that it blends into the background, is a black hat SEO strategy.

Fix

Check the HTML code of the affected pages, as well as the CSS styling, for hidden text and for excessive repetition of keywords, including in the meta tags.
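A rough first pass over a page can be scripted. The sketch below measures how much of the text a single keyword takes up and lists inline styles that hide an element. Both helpers are hypothetical, there is no official density threshold that I know of, and plenty of hidden elements (menus, modals) are legitimate, so use the output only to decide where to look closer.

```python
import re
from collections import Counter

# Inline style declarations that commonly hide an element.
HIDDEN_STYLE = re.compile(r"display\s*:\s*none|visibility\s*:\s*hidden", re.I)


def keyword_density(text: str, keyword: str) -> float:
    """Share of words in `text` that equal the single-word `keyword`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return Counter(words)[keyword.lower()] / len(words)


def inline_hidden_styles(html: str) -> list[str]:
    """Return the inline style values that hide their element."""
    styles = re.findall(r'style\s*=\s*"([^"]*)"', html)
    return [style for style in styles if HIDDEN_STYLE.search(style)]


print(keyword_density("cheap shoes cheap shoes buy cheap", "cheap"))
print(inline_hidden_styles('<p style="display:none">cheap shoes</p>'))
```

Text hidden through an external stylesheet or by matching the background color will not show up here, so you still need to inspect the CSS itself.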

AMP content mismatch

AMP, or Accelerated Mobile Pages, is a lighter version of a webpage for mobile users. The AMP version of a webpage should have the same content as the original (canonical) page.

Fix

Make sure that the AMP page is canonicalized to the correct web page. An AMP content mismatch manual action will not gravely affect your rankings, but Google will drop the AMP version from mobile search results and show the original webpage instead.
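The pairing works in both directions: the original page points to its AMP version with `rel="amphtml"`, and the AMP page points back with `rel="canonical"`. Here is a small sketch that checks both links from the two pages’ HTML. The function names and URLs are made up for the example.

```python
from html.parser import HTMLParser


class LinkRelParser(HTMLParser):
    """Map each rel value found on <link> tags to its first href."""

    def __init__(self):
        super().__init__()
        self.links = {}

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        href = attr.get("href")
        if not href:
            return
        for rel in (attr.get("rel") or "").lower().split():
            self.links.setdefault(rel, href)


def link_rels(html: str) -> dict:
    parser = LinkRelParser()
    parser.feed(html)
    return parser.links


def amp_pair_is_consistent(canonical_html, canonical_url, amp_html, amp_url):
    """True if the two pages reference each other correctly."""
    points_to_amp = link_rels(canonical_html).get("amphtml") == amp_url
    points_back = link_rels(amp_html).get("canonical") == canonical_url
    return points_to_amp and points_back


original = '<link rel="amphtml" href="https://samplesite.com/post/amp/">'
amp = '<link rel="canonical" href="https://samplesite.com/post/">'
print(amp_pair_is_consistent(
    original, "https://samplesite.com/post/",
    amp, "https://samplesite.com/post/amp/",
))
```

This only verifies the links between the two pages. You still need to compare the visible content of both versions yourself.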

Sneaky mobile redirects

Mobile users may see a different version of a webpage if it’s a feature built specifically for mobile users. However, there are instances where mobile users are redirected to a different URL, either through code or through a script that forces them to open an ad.

Fix

If you have been given this manual action and you did not set up the redirect intentionally, check whether your website has been hacked and scan it for malware.

What to Do After Fixing Manual Actions?

After ensuring that you fixed the problems causing the manual action, you should submit a reconsideration request to Google in the Manual Action report.

When writing a reconsideration request, be as detailed as possible about how you fixed the problem. If your website has been hacked, make sure that you also let Google know about it. Regardless of the gravity of the issues, reconsideration requests may take a few days or weeks. Google will email you once the review is complete and tell you whether your request has been accepted or rejected.

Best Practices when Fixing Manual Actions

Fix all issues on all pages before requesting reconsideration

Since receiving a manual action results in lost rankings and traffic, some webmasters apply half-done fixes to start the review and regain their traffic as early as possible. It is best to be patient and make sure your website is clear of every issue that resulted in the penalty.

Make the pages accessible

Make sure that the pages affected by the manual action are not blocked from crawling by your robots.txt file.
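You can check this locally with Python’s built-in `urllib.robotparser`. The sketch below tests a URL against the text of a robots.txt file; the `is_crawlable` helper and the sample rules are hypothetical. Keep in mind that the standard-library parser does not replicate every detail of how Googlebot reads robots.txt (wildcards, for example), so confirm anything important in Search Console.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Check `url` against robots.txt rules supplied as a string."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical rules for illustration.
rules = "User-agent: *\nDisallow: /private/\n"

print(is_crawlable(rules, "https://samplesite.com/private/page.html"))
print(is_crawlable(rules, "https://samplesite.com/blog/post"))
```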

Do not send reconsideration requests repeatedly

Repeatedly sending reconsideration requests will not make the review process any faster and might just result in Google seeing you as a spammer.

How Long Before My Website Can Recover?

There is no way to tell how long it will take for your website to regain its rankings and traffic. It may depend on the gravity of the penalty. If it affects just a few pages on your website, it might take a few weeks or a month. If it affects your whole website, it can take months or maybe years. Once you fix a manual action, Google will not give you a timeline.

What you should know is that when a webpage or website gets penalized by Google, it loses all trust and authority. You’ll be starting fresh and you need to work your way up again.

How do I Avoid Manual Actions?

It’s pretty simple: do White Hat SEO. It is the right way of doing SEO and it should be the only way you are doing it.

Create a holistic optimization plan for your website. Make sure you have a good user interface and user experience, optimize for mobile, and publish great content that attracts links. You have more than 200 ranking factors to work with, and every day you should look into at least one of these factors and ask yourself: “What can I optimize today?”

You’ll need a lot of patience, but continuously improving your website the right way is 100% better than trying to recover from a manual action.